Nowadays, Power BI is used by many large organizations to collect information that helps their organization grow in the market. The increasing use of Power BI in such organizations, demands, mostly a development team that takes care of the reports’ development & deployment from A to Z. This demands the team to follow best practices of development and deployment which includes source control, multiple environments (i.e DEV, TEST, PROD, etc.), deployment processes, etc.

Since I have been working with Power BI for long, I could find no step-by-step guide or best practices to follow for the development & deployment of Power BI reports, just as there is Software Development Lifecycle. Generally, for SDLC, there are source control tools available that make maintenance of the code for different environments easier (through branching) and even visually merging & deleting of the code, post any changes with the current development code.

Some of you might be confused about the need to have a lifecycle for the Power BI reports.

“why on the earth would we need a lifecycle for Power BI report?”

I faced this issue while using shared Power BI datasets as a data source. Because just we can’t keep developing on the same dataset file which is used by the reports that are already deployed and consumed by users. Any small bug can lead to multiple errors in multiple reports. As long as I know, there is no tool/source control that compares two .pbix files, merges the changes and makes the development & deployment of the report easier for the team.

So, let’s try to understand that how having multiple environments can come to rescue in this case.

Generally, there is a practice of having 3 environments – DEV, TEST & PROD.

Let’s go through a scenario where the report is deployed with the same process as we deploy any code. So, when the development of the report is completed, we deploy it to the DEV environment where developers/QA team members test the report. Once the testing is completed, it is deployed to the TEST/UAT environment where the Client will test the report & give a sign off before deploying it to the production. If everything seems alright, we are ready to deploy the report to the PROD environment where end-users will start interacting with it. Creating 3 workspaces for these different environments and deploying the reports accordingly helps us in this simple scenario where there are no shared datasets and there are no multiple iterations simultaneously.

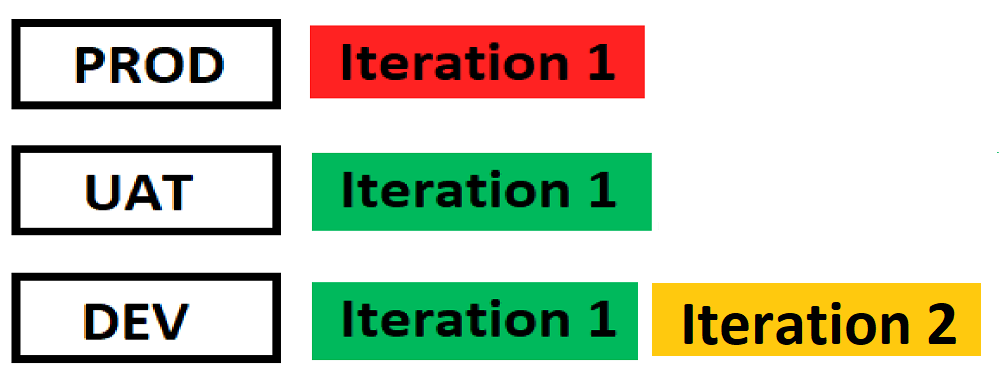

But what if the development of the reports is divided into iterations and we want to keep continuing with the development of the reports for the next iteration?

Suppose, we have started the development for Iteration 2. So, the changes for the Iteration 2 will be done in the same shared dataset that was used for creating reports in Iteration 1. Now say, while developing them, a bug is reported in the PROD environment for the reports in Iteration 1 which needs to be fixed urgently. We now cannot iterate back, undo the changes done for Iteration 2 in the dataset, fix the bug in DEV/TEST, and then again do changes for Iteration 2 as doing this would eat up a lot of time and effort. We need a better development lifecycle process to avoid such situations.

The current state explained in image below:

To curb this issue, below are a few obvious solutions –

1). We can fix the bug in the PROD dataset and then publish it. But it’s not advised to directly publish the changes to the PROD environment as the end-users can face more issues after the fix.

2). If the issue is not critical and can wait for the Iteration 2 to complete, we can then directly fix the bug in the development dataset and release the fix while deploying Iteration 2. But this approach could only be taken when the issue is not critical.

Hence, we have devised a solution that matches this scenario perfectly without taking much time and effort for the bug fixation & deployment.

The solution includes the use of two Power BI PRO accounts:

1). DEV account – For DEV & TEST environments

2). PROD account – For PROD environment

Note: We have purposefully kept the PROD account separate to avoid any accidental deployments into the PROD workspace.

The DEV account would have 4 workspaces named as DEV, DEV TEST, TEST & DEV PROD

The PROD account would have 1 workspace named as PROD

1). Reports are published in the DEV workspace of the DEV account. This will be used by the developer for Unit testing.

2). After the developers are done with the development of the reports, these reports will be moved to the DEV TEST workspace. This workspace will be used only by the QA team to test the reports.

3). After successful testing of the reports by the QA team in the DEV TEST workspace, these reports will be moved to the TEST workspace. This workspace will only be used for UAT.

4). After successful UAT testing, the reports will be moved to DEV PROD, where the QA team will test the updated dataset with the existing PROD environment reports.

5). And finally, if everything seems alright for all the reports in DEV PROD workspace, they are moved to PROD workspace in the other account (PROD account in this example). The reports in this workspace will be directly interacted by the end-users.

Note: The reports/datasets in (DEV TEST and TEST) & (DEV PROD and PROD) should always be in sync. Reports should always be published from DEV TEST ->TEST or DEV PROD->PROD, and it shouldn’t be the other way around (TEST->DEV TEST or PROD->DEV PROD)

Now, if bugs reported in the PROD environment, we will fix them in the DEV PROD dataset (as DEV PROD & PROD are always in sync), and after the successful testing by the developer, we’ll publish the report to PROD workspace.

Note: We will have to manually replicate these changes in the reports/datasets of DEV Workspace to ensure they get deployed in the next iteration too. Using this process, we’ll have a copy of report for every environment which could be used in case of accidental loss of work.

Bonus: To keep ‘DEV TEST & TEST’ and ‘DEV PROD & PROD’ environment in sync, you can write a Powershell script that will automatically copy the report from DEV TEST to TEST Workspace on a single click.

Please refer to the below link: Duplicate Workspace using Power BI REST API

We can also set the users with View Role in the PROD account so that they cannot edit the report, only see and test them.

1). No direct release of bug fixes in the PROD environment.

2). Avoid iterating back or reversing of the changes done.

3). Avoid messing up of changes in earlier Iterations.

4). We will always have a backup of the PROD workspace files into the DEV PROD workspace & TEST workspace files in the DEV TEST workspace.

This is the procedure/method that we came up with, the scenario that includes shared datasets and quick fix of the bugs. If anyone has a better & optimized solution than the proposed solution, please feel free to comment down. I will update the post accordingly which would help our fellow Power BI colleagues.

Please note that we have not used App Workspaces as these reports are going to be embedded and we can also use Azure File Storage or SharePoint to manage versions of each of our pbix file.

Happy Reporting!

© All Rights Reserved. Inkey IT Solutions Pvt. Ltd. 2024

[…] maintaining Power BI Report cycle, as discussed in one of our previous blogs. Please refer to this link if you have not looked at it […]

Hi

If we have two groups/departments (sales and accounts) we would have 10 workspaces

DEV Accounts

DEV TEST Accounts

TEST Accounts

DEV PROD Accounts

PROD Accounts

DEV Sales

DEV TEST Sales

TEST Sales

DEV PROD Sales

PROD Sales

That is correct!

I think we can do it with 4 workspaces only DEV,QA,Staging and PROD.

Process:

1.we can the use the Deployment Pipeline option available in Power bi and create a deployment pipeline and assign workspaces accordingly to 4 stages, for the first iteration the process will be straight forward like deploy from dev,qa,staging,prod and in second iteration the pbix file from qa which got signoff will be used for 2nd iteration development and will be published to dev as a filename-2nditeration after development, then will be moved to qa with same name and after signoff will be moved to staging and in staging this 2nd iteration report will replace the org one then will be deployed to prod.

2.In 2nd scenario,,If qa is testing the 2nd iteration report in qa and we get a bug in prod for 1st iteration report,in this case ,the bug wl be fixed in dev then moved to qa as bug fixed version (will not replace the org report or 2nd iteration report) and after qa testing will move to staging,here it will replace the org and deployed to prod.

with above type of deployment we can always have version backups in qa and can be used accordingly.

Hello Nayeenm,

Yes, you are correct. You can go through the blogs we have recently published for version controlling and managing the deployment process in a much more effective way using recent updates of Git integration and deployment pipeline. Here are links to the blogs that you go through.

https://www.inkeysolutions.com/blogs/empowering-pro-power-bi-developers-with-git-integration-in-power-bi-desktop/, https://www.inkeysolutions.com/blogs/streamlining-power-bi-deployment-pipelines/,

https://www.inkeysolutions.com/blogs/integrating-git-with-workspaces/.